Learning rate for nonstationary signals:

- The Problem

The problem is to design an adaptive learning rate that tracks the input signals on-line and modifies the synaptic weights of a neural network whenever the signal statistics change.

- Feed-forward and recurrent networks

We have proposed a learning rule that adapts the learning rate (in matrix and vector form) for feed-forward and recurrent networks.

See the paper presented at ISCAS 1996.
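The idea of a self-adapting learning rate can be illustrated with a small scalar sketch. It is not the rule from the ISCAS paper: the update form and the names `alpha`, `beta`, `delta` and `eta_max` are assumptions chosen for illustration. The learning rate follows a leaky average of the gradient, so it grows when the signal statistics change and decays once the estimate has settled.

```python
import random

def track_with_adaptive_rate(signal, alpha=0.05, beta=0.5, delta=0.1, eta_max=0.5):
    """Track the mean of `signal` with a self-adapting learning rate.

    Illustrative assumption, not the published rule:
      v   <- leaky average of the instantaneous gradient
      eta <- leaky average of min(beta * |v|, eta_max)
    Returns the lists of estimates w(t) and learning rates eta(t).
    """
    w, v, eta = 0.0, 0.0, 0.0
    ws, etas = [], []
    for x in signal:
        e = x - w                          # instantaneous error
        v = (1 - delta) * v + delta * e    # smoothed gradient
        target = min(beta * abs(v), eta_max)
        eta = (1 - alpha) * eta + alpha * target
        w += eta * e                       # weight update with current rate
        ws.append(w)
        etas.append(eta)
    return ws, etas

# Nonstationary test signal: the mean jumps from 1.0 to 4.0 at sample 500.
rng = random.Random(0)
signal = [1.0 + 0.1 * rng.gauss(0, 1) for _ in range(500)]
signal += [4.0 + 0.1 * rng.gauss(0, 1) for _ in range(500)]

ws, etas = track_with_adaptive_rate(signal)
print("rate before switch:", round(etas[499], 4))
print("peak rate after switch:", round(max(etas[500:560]), 4))
print("final estimate:", round(ws[-1], 3))
```

In this sketch the rate stays small while the estimate is near the true mean, rises sharply after the jump, and decays again once the new mean has been found. The clipping value `eta_max` is a hypothetical safeguard against instability.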

- Example for blind source separation

The validity of the proposed learning rate adaptation is demonstrated below for a feed-forward neural network performing blind source separation.

Example:

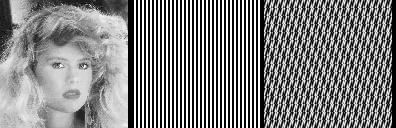

Fig. 1 Three image sources - one natural and two synthetic images.

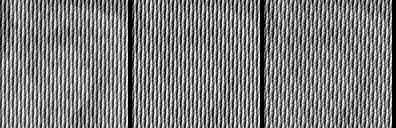

Fig. 2 The input is switched twice between the two mixtures shown above. The top mixture appears during epochs 1-2 and 5-6, and the bottom mixture during epochs 3-4.

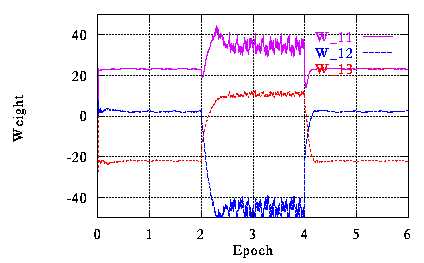

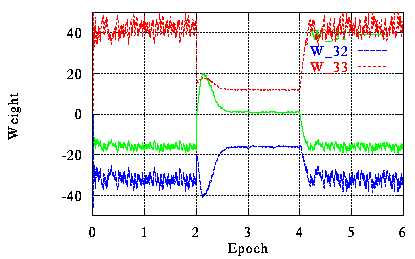

Fig. 3 The behavior of synaptic weights (3x3-matrix) during learning.

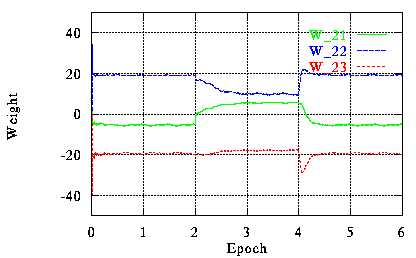

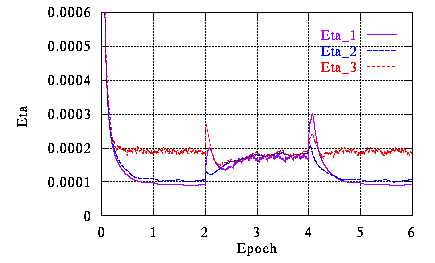

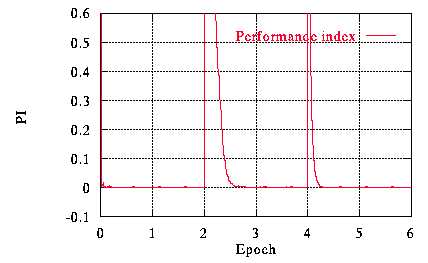

Fig. 4 The behavior of the learning rate vector Eta and the combined separation error index PI.
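
The definition of the index PI is not given on this page. As a rough sketch, one cross-talk index commonly used in the blind separation literature is computed from the global matrix G = W A (separating matrix times mixing matrix). It is zero when G is a scaled permutation matrix, that is, when separation is perfect. The function names and the normalization to the range [0, 1] are assumptions, and the index plotted in Fig. 4 may be defined differently.

```python
def matmul(a, b):
    """Plain-Python matrix product of two lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]

def crosstalk_index(g):
    """Cross-talk index of a square global matrix g = W A.

    Each row and each column contributes (sum of |entries| / largest |entry|) - 1.
    The total is divided by 2 n (n - 1) so that the result lies in [0, 1].
    Assumes n >= 2 and that no row or column of g is all zeros.
    """
    n = len(g)
    rows = [[abs(x) for x in row] for row in g]
    cols = [list(c) for c in zip(*rows)]
    row_term = sum(sum(r) / max(r) - 1.0 for r in rows)
    col_term = sum(sum(c) / max(c) - 1.0 for c in cols)
    return (row_term + col_term) / (2.0 * n * (n - 1))

# Hypothetical 3x3 mixing matrix and an untrained (identity) separating matrix.
A = [[1.0, 0.5, 0.2],
     [0.3, 1.0, 0.4],
     [0.6, 0.1, 1.0]]
W_identity = [[1.0, 0.0, 0.0],
              [0.0, 1.0, 0.0],
              [0.0, 0.0, 1.0]]

pi_unseparated = crosstalk_index(matmul(W_identity, A))

# A scaled permutation matrix stands for a perfectly separating global system.
G_perfect = [[0.0, 2.0, 0.0],
             [-0.5, 0.0, 0.0],
             [0.0, 0.0, 3.0]]
pi_perfect = crosstalk_index(G_perfect)

print(pi_unseparated, pi_perfect)
```

An index of this kind is insensitive to the ordering and scaling of the recovered sources, which blind separation cannot determine anyway.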

W. Kasprzak, June 1996